When Architecture Becomes the Problem

Over-architecture is rarely deliberate. It accumulates through resume-driven engineering, vendor influence, and big-tech pattern copying until the architecture exceeds the problem it was meant to solve.

A team needs a simple internal application.

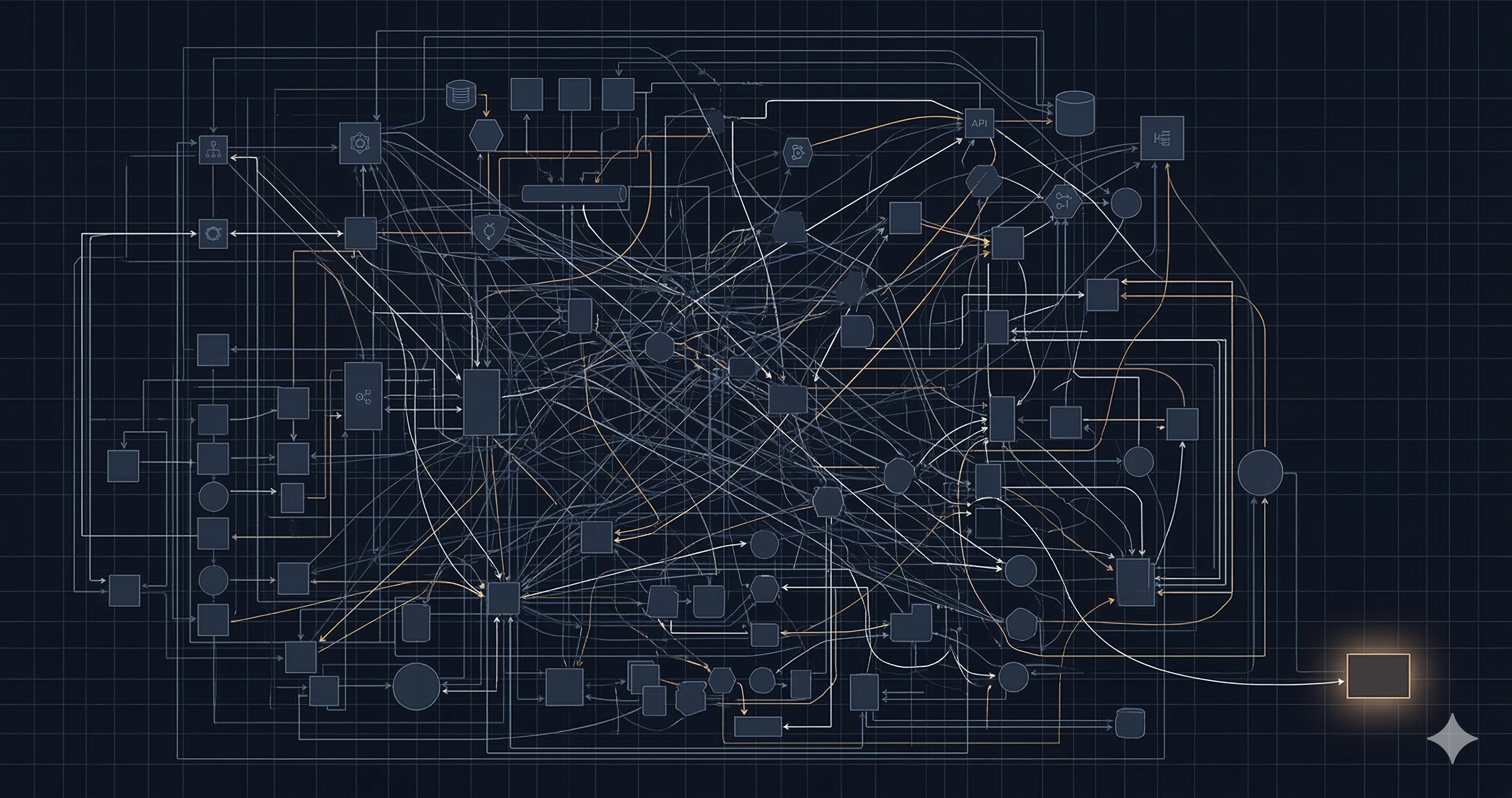

Six months later the architecture looks like this:

- Microservices (18 services)

- Event streaming (Kafka)

- Kubernetes with a service mesh

- Three databases

- A feature flag platform

- A dedicated observability stack with distributed tracing

The system supports 500 users. It handles internal support tickets. It has no SLA requirement above “best effort.”

The architecture is more complex than the problem.

The Problem

This is not unusual. Over-architecture builds up quietly, and it rarely happens because engineers made bad decisions. It happens because of how good engineers make decisions.

There are five recurring causes.

Resume-driven engineering. Kubernetes looks better on a CV than “we ran it on a single VM.” This is not cynicism; it is a real incentive misalignment between what benefits an engineer’s career and what benefits the system.

Vendor influence. Cloud providers and platform vendors make it easy to provision new capabilities. The cost of adding a service is low; the cost of removing one is not apparent until later.

Big-tech pattern copying. Netflix decomposed its monolith into microservices to solve Netflix problems. Amazon adopted event streaming to solve Amazon problems. A 10-engineer team copying those patterns inherits the operational complexity without the scale that justifies it.

Fear of scalability bottlenecks. Teams over-provision for growth that may never come. The assumption is that it is easier to build for scale upfront than to retrofit later. In most cases, the opposite is true. Premature scale investment is expensive, and the requirements usually change before the system reaches that scale.

Platform team pressure. Internal platform teams are incentivized to adopt and standardize on tooling. Once a platform team has stood up Kubernetes, other teams face pressure to run on it, whether or not they need it.

Can It Be Measured?

The question most teams do not ask is whether their architecture is proportional to the problem.

Not whether it is correct. Not whether it is scalable. Whether it is proportional.

A measurement model for over-architecture needs three inputs:

- System need: what does the workload actually require? Scale, criticality, reliability targets, regulatory burden, external integration complexity.

- Architecture complexity: what has been built? Service topology, async messaging, data store count, container orchestration, observability depth.

- Team capacity: can the team operate what has been built? Team size, operational maturity, on-call coverage.

Justified complexity is what the system's need warrants and the team's capacity can sustain. The gap between architecture complexity and justified complexity is the over-architecture signal.

When complexity significantly exceeds what the scale, criticality, and team capacity can justify, the architecture is not a capability; it is a liability.
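One way to write this down, as a sketch only: the symbols and the use of a minimum are assumptions, not the detector's published formula. Let $N$ be the need index, $K$ the team's capacity, and $C_{\text{arch}}$ the complexity of what has been built; $f$ and $g$ are placeholder functions that express need and capacity on the same scale as $C_{\text{arch}}$.

$$
C_{\text{justified}} = \min\bigl(f(N),\, g(K)\bigr), \qquad
G = C_{\text{arch}} - C_{\text{justified}}
$$

Taking the minimum encodes the idea that complexity is justified only if the workload needs it and the team can also operate it. A clearly positive $G$ is the over-architecture signal.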

The Tool

Here is a detector that makes this measurable.

The Cloud Over-Architecture Detector takes context inputs (workload scale, business stage, reliability requirements, external integrations), maps them to a need index, and evaluates selected architecture components against that index. It produces an architecture complexity score, a cost inflation risk estimate, and a set of findings and recommendations.
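A minimal sketch of that pipeline, under loudly stated assumptions: the component weights, the stage and reliability multipliers, the logarithmic dampening of request volume, and `SCORE_SCALE` are all invented for illustration and are not the detector's actual model. It covers only a subset of the inputs and only the complexity score; cost inflation risk and findings are left out. It does follow the structure the article describes: context maps to a need index, and the need index is the denominator for the selected components' complexity.

```python
import math
from dataclasses import dataclass
from typing import Iterable

# Hypothetical complexity weights per component; NOT the detector's real values.
COMPONENT_WEIGHTS = {
    "microservices": 3.0,
    "event_streaming": 2.5,
    "kubernetes": 2.5,
    "service_mesh": 2.0,
    "multiple_databases": 1.5,
    "feature_flags": 1.0,
    "distributed_tracing": 1.0,
}

# Hypothetical multipliers: later stages and stricter targets justify more complexity.
STAGE_FACTOR = {"prototype": 1.0, "growth": 2.0, "enterprise": 3.0}
RELIABILITY_FACTOR = {"standard": 1.0, "high_availability": 2.0, "mission_critical": 3.0}

SCORE_SCALE = 10.0  # hypothetical: converts the complexity/need ratio to a 0-100 score


@dataclass(frozen=True)
class Context:
    monthly_requests_millions: float
    business_stage: str
    reliability_target: str
    external_integrations: int


def need_index(ctx: Context) -> float:
    """Map workload context to a need index of at least 1.0 (hypothetical formula)."""
    scale = 1.0 + math.log10(1.0 + ctx.monthly_requests_millions)  # dampen raw volume
    integrations = 1.0 + 0.1 * ctx.external_integrations
    return (scale
            * STAGE_FACTOR[ctx.business_stage]
            * RELIABILITY_FACTOR[ctx.reliability_target]
            * integrations)


def complexity_score(components: Iterable[str], ctx: Context) -> float:
    """Selected-component complexity divided by the need index, capped at 100."""
    raw = sum(COMPONENT_WEIGHTS[c] for c in components)
    return min(100.0, SCORE_SCALE * raw / need_index(ctx))


# The ticketing portal: 0.5M requests, no SLA, no integrations.
# The business stage is not stated in the article; "growth" is an assumption.
ticketing = Context(0.5, "growth", "standard", 0)
ticketing_score = complexity_score(COMPONENT_WEIGHTS.keys(), ticketing)
```

With these made-up weights, the ticketing portal's full component list lands above the article's threshold of 50, while the same components at enterprise scale with a mission-critical target score far lower. Dividing by the need index, rather than subtracting a fixed budget, is what lets the same architecture be proportional in one context and excessive in another.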

After getting your score, you can generate an AI Assessment Brief. It produces a 4–5 sentence executive summary explaining the score, identifying the primary architectural drivers, describing the operational implications, and recommending a concrete simplification or validation step. The brief runs on Cloudflare Workers AI using Llama 3.1 8B at the edge, with no dedicated GPU cluster and no model management infrastructure. That choice is intentional. Running edge inference for a low-volume tool is itself an example of the same principle: use architecture proportional to the problem.
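A sketch of how the brief's request could be assembled, assuming a chat-style prompt. The prompt wording and the helpers `build_brief_messages` and `rest_endpoint` are hypothetical. The model name `@cf/meta/llama-3.1-8b-instruct` is the Workers AI catalog identifier for Llama 3.1 8B Instruct, and the URL follows Cloudflare's REST pattern for running a Workers AI model. The code only builds the payload; it sends nothing.

```python
import json
from typing import Dict, List

# Workers AI catalog identifier for Llama 3.1 8B Instruct.
MODEL = "@cf/meta/llama-3.1-8b-instruct"

# Hypothetical instructions that mirror the brief's described structure.
SYSTEM_PROMPT = (
    "You are an architecture reviewer. Write a 4-5 sentence executive summary that "
    "explains the complexity score, names the primary architectural drivers, "
    "describes the operational implications, and recommends one concrete "
    "simplification or validation step."
)


def build_brief_messages(score: float, drivers: List[str],
                         context: Dict[str, object]) -> List[Dict[str, str]]:
    """Build a chat-style messages list for the brief (payload only)."""
    facts = {"complexity_score": round(score, 1), "drivers": drivers, "context": context}
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": json.dumps(facts, sort_keys=True)},
    ]


def rest_endpoint(account_id: str) -> str:
    """REST URL for running the model from outside a Worker."""
    return f"https://api.cloudflare.com/client/v4/accounts/{account_id}/ai/run/{MODEL}"


messages = build_brief_messages(57.4, ["kubernetes", "service_mesh"], {"users": 500})
```

Inside a Worker with an AI binding named `AI` (the binding name is configurable), the same list would be passed as `env.AI.run(MODEL, { messages })` in JavaScript; from elsewhere it would be POSTed as `{"messages": [...]}` to the REST endpoint with an API token. Neither path involves a GPU cluster or model hosting.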

Reading the Inputs

The context inputs shape the need index (the denominator in the complexity calculation).

- Monthly requests: Backend API or server calls, not page views or users. A single page load typically triggers 5–20 API calls depending on the application.

- Team size: Engineers actively operating and evolving the platform. Count the people who get paged when it breaks, not the full engineering org.

- Team maturity (1–5): 1 is a team encountering distributed systems for the first time. 3 has operated a service-oriented architecture in production. 5 has run Kubernetes or a service mesh and managed its failure modes directly.

- Business stage: Prototype means the product is pre-revenue or pre-product-market fit. Growth means it is generating revenue and scaling. Enterprise means it is a core operating asset with contractual reliability obligations.

- Reliability target: Standard is anything without a public SLA. High availability means 99.9% uptime as an operational target. Mission critical means 99.95%+ with contractual or safety implications.

- Regulatory burden: Low is no industry-specific compliance. Moderate is GDPR, SOC 2, or ISO 27001. High is PCI-DSS, HIPAA, or financial services regulation with direct audit exposure.

- External integrations: APIs your system calls, or that call into your system, from outside your own infrastructure. A database is not an integration. A payment processor, CRM, identity provider, analytics pipeline, or third-party data feed is. A fully self-contained internal tool (with its own auth, its own data store, and no dependency on external services) legitimately has none. The ticketing portal in the example below qualifies.
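The input definitions above can be captured as a small validated schema. This is a sketch: the class and field names are hypothetical, not the detector's own. It also converts the two numeric reliability targets into a monthly downtime budget, assuming a 30-day month, to make the difference between them concrete.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    PROTOTYPE = "prototype"
    GROWTH = "growth"
    ENTERPRISE = "enterprise"


class Reliability(Enum):
    STANDARD = "standard"
    HIGH_AVAILABILITY = "high_availability"
    MISSION_CRITICAL = "mission_critical"


class Regulatory(Enum):
    LOW = "low"
    MODERATE = "moderate"
    HIGH = "high"


# Numeric targets from the definitions; "standard" has none.
# Mission critical is 99.95% or higher, so 0.9995 is its floor.
AVAILABILITY_TARGET = {
    Reliability.HIGH_AVAILABILITY: 0.999,
    Reliability.MISSION_CRITICAL: 0.9995,
}


@dataclass(frozen=True)
class DetectorInputs:
    monthly_requests: int       # backend API calls, not page views or users
    team_size: int              # people who get paged, not the whole org
    team_maturity: int          # 1-5
    stage: Stage
    reliability: Reliability
    regulatory: Regulatory
    external_integrations: int  # a database does not count

    def __post_init__(self) -> None:
        if not 1 <= self.team_maturity <= 5:
            raise ValueError("team_maturity must be between 1 and 5")
        if self.team_size < 1:
            raise ValueError("team_size must be at least 1")
        if self.monthly_requests < 0 or self.external_integrations < 0:
            raise ValueError("counts cannot be negative")


def monthly_downtime_budget_minutes(target: float, days: int = 30) -> float:
    """Minutes of downtime an availability target allows over `days` days."""
    return (1.0 - target) * days * 24 * 60
```

Over a 30-day month, 99.9% allows about 43.2 minutes of downtime and 99.95% about 21.6 minutes. Halving that budget is what separates the two upper tiers, which is one reason the mission-critical tier justifies more architecture.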

An Example

Internal Customer Support Ticketing Portal. 500 users. Single region. No SLA requirement.

The team decomposed the system into separate services for authentication, ticket routing, notifications, and search. They adopted Kubernetes, Kafka, and a service mesh to future-proof the platform and emulate the patterns they had seen at larger companies. Each decision made sense in isolation. For 500 internal users with no SLA, none of them were necessary.

The form below is pre-loaded with this architecture. Adjust any input to see how the score changes in real time.

If you know your user count but not your request volume, here is a rough conversion: multiply daily active users by their average API calls per session (10–50 is typical for a web app), then by 30. A 500-user internal tool with moderate daily use generates roughly 0.1–1M requests per month. The example uses 0.5M.
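The conversion above as a small helper. It assumes one session per daily active user per day, which is what multiplying daily active users by calls per session implies; `estimate_monthly_requests` is a hypothetical name.

```python
def estimate_monthly_requests(daily_active_users: int, calls_per_session: int,
                              days: int = 30) -> int:
    """Rough monthly backend request volume: DAU x API calls per session x days.

    Assumes one session per daily active user per day.
    """
    return daily_active_users * calls_per_session * days


low = estimate_monthly_requests(500, 10)   # 150,000
high = estimate_monthly_requests(500, 50)  # 750,000
```

If all 500 users were active every day, the typical 10–50 calls per session gives 150,000 to 750,000 requests a month, inside the 0.1–1M range.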

The score reflects a significant mismatch between what the workload requires and what has been built. Generate the AI Assessment Brief to see the specific combination driving the gap and the smallest intervention that would address it.

The proportional architecture for this system would have been a single modular monolith backed by Postgres, with a standard background job queue handling notifications and async ticket processing, deployed as a single service. That architecture supports 500 users, is operable by a small team, and does not require Kubernetes, Kafka, or a service mesh to function.
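To make the proportional alternative concrete, here is a minimal in-process sketch of the "standard background job queue" idea using only Python's standard library: creating a ticket enqueues a notification job, and a worker thread sends it, all inside one service. `NotificationJob`, `create_ticket`, and `send_notification` are hypothetical names, and the Postgres write is omitted.

```python
import queue
import threading
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class NotificationJob:
    ticket_id: int
    recipient: str


jobs: "queue.Queue" = queue.Queue()  # holds NotificationJob items, or None to stop
sent: List[str] = []  # stands in for an email or chat integration


def send_notification(job: NotificationJob) -> None:
    sent.append(f"ticket {job.ticket_id} -> {job.recipient}")


def worker() -> None:
    """Process jobs in order until a None sentinel arrives."""
    while True:
        job = jobs.get()
        try:
            if job is None:
                return
            send_notification(job)
        finally:
            jobs.task_done()  # runs for real jobs and for the sentinel


def create_ticket(ticket_id: int, requester: str) -> None:
    # A real monolith would INSERT the ticket into Postgres here (omitted).
    jobs.put(NotificationJob(ticket_id, requester))


t = threading.Thread(target=worker, daemon=True)
t.start()
create_ticket(1, "alice@example.com")
create_ticket(2, "bob@example.com")
jobs.join()     # wait until both notifications are processed
jobs.put(None)  # ask the worker to stop
t.join()
```

An in-memory `queue.Queue` loses pending jobs if the process restarts, so this only illustrates the shape. A durable version in the same spirit would store jobs in a Postgres table and have workers claim rows with `SELECT ... FOR UPDATE SKIP LOCKED`, which keeps the system at one service and one database.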

A Reflection

The model is not a perfect measurement. It uses heuristics. It does not know your team’s actual capability, your product roadmap, or your contractual constraints. There are cases where a high score is entirely justified: mission-critical workloads, high-compliance environments, teams with genuine operational maturity running at enterprise scale.

But the model forces a question most teams do not ask:

Is this architecture proportional to the problem?

Not in principle. In practice, right now, with this team, for this workload.

That question is rarely part of architecture review. Teams assess whether a system can scale. They assess whether it is secure. They assess whether it follows standards. They do not often assess whether its complexity is justified by what it actually needs to do.

That gap is where over-architecture lives.

The same caveat applies to the findings and recommendations. They are generated from a rule-based model that evaluates inputs against known cost and complexity patterns, not from static analysis of your actual codebase or deployment topology. A finding that flags Kubernetes as disproportionate for a four-person team is accurate as a statistical pattern. It is not accurate if your team specializes in Kubernetes operations and manages it as a core competency. The model surfaces the structural question. Your team answers it.
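A minimal sketch of what "rule-based" can mean here, with the team's answer built in. The rule names, the thresholds (`team_size < 6`, `team_maturity < 4`), and the `acknowledged` parameter are all hypothetical, not the detector's actual rules. Each rule sees only declared inputs, which is exactly why it cannot tell a Kubernetes-specialist team from any other small team; the acknowledged set is where the team records that answer.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, List, Optional


@dataclass(frozen=True)
class Architecture:
    components: FrozenSet[str]
    team_size: int      # engineers who get paged
    team_maturity: int  # 1-5 scale from the inputs section


@dataclass(frozen=True)
class Finding:
    rule_id: str
    message: str


Rule = Callable[[Architecture], Optional[Finding]]


def kubernetes_small_team(arch: Architecture) -> Optional[Finding]:
    # Hypothetical threshold: fewer than 6 operators for a container orchestrator.
    if "kubernetes" in arch.components and arch.team_size < 6:
        return Finding("k8s-small-team",
                       f"Kubernetes operated by {arch.team_size} engineers may be disproportionate.")
    return None


def mesh_low_maturity(arch: Architecture) -> Optional[Finding]:
    # Hypothetical threshold: a service mesh below maturity level 4.
    if "service_mesh" in arch.components and arch.team_maturity < 4:
        return Finding("mesh-low-maturity",
                       "A service mesh may exceed this team's current operational maturity.")
    return None


RULES: List[Rule] = [kubernetes_small_team, mesh_low_maturity]


def evaluate(arch: Architecture, rules: List[Rule] = RULES,
             acknowledged: FrozenSet[str] = frozenset()) -> List[Finding]:
    """Run every rule, then drop findings the team has reviewed and accepted."""
    findings = [f for f in (rule(arch) for rule in rules) if f is not None]
    return [f for f in findings if f.rule_id not in acknowledged]


k8s_specialists = Architecture(frozenset({"kubernetes"}), team_size=4, team_maturity=5)
flagged = evaluate(k8s_specialists)  # one finding: k8s-small-team
cleared = evaluate(k8s_specialists, acknowledged=frozenset({"k8s-small-team"}))  # []
```

The rule fires for the four-person team regardless of its maturity; only an explicit acknowledgement clears it. That keeps the pattern-level judgment and the team-level judgment separate, as the paragraph above suggests they should be.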

Try It

The Cloud Over-Architecture Detector is at sarfarajey.com/lab/cloud-over-architecture-detector.

Set your workload context, select the components in your architecture, and read the findings. If the score is above 50, the model indicates that the system has more architecture than the problem requires.

Generate the executive brief and use it to start a conversation with your team.