AI Systems Are Quietly Exploding Architecture Complexity

AI features appear simple on the surface but introduce hidden architectural weight: new systems, pipelines, and governance gaps that compound before the project has a name. Here's how to measure it before it becomes unmanageable.

Related Lab Tool

Try the interactive decision model for this topic.

Your product team asks for a customer support AI assistant. It sounds like a chat widget. A few API calls. Two tickets. Maybe a sprint.

Then you start designing the architecture.

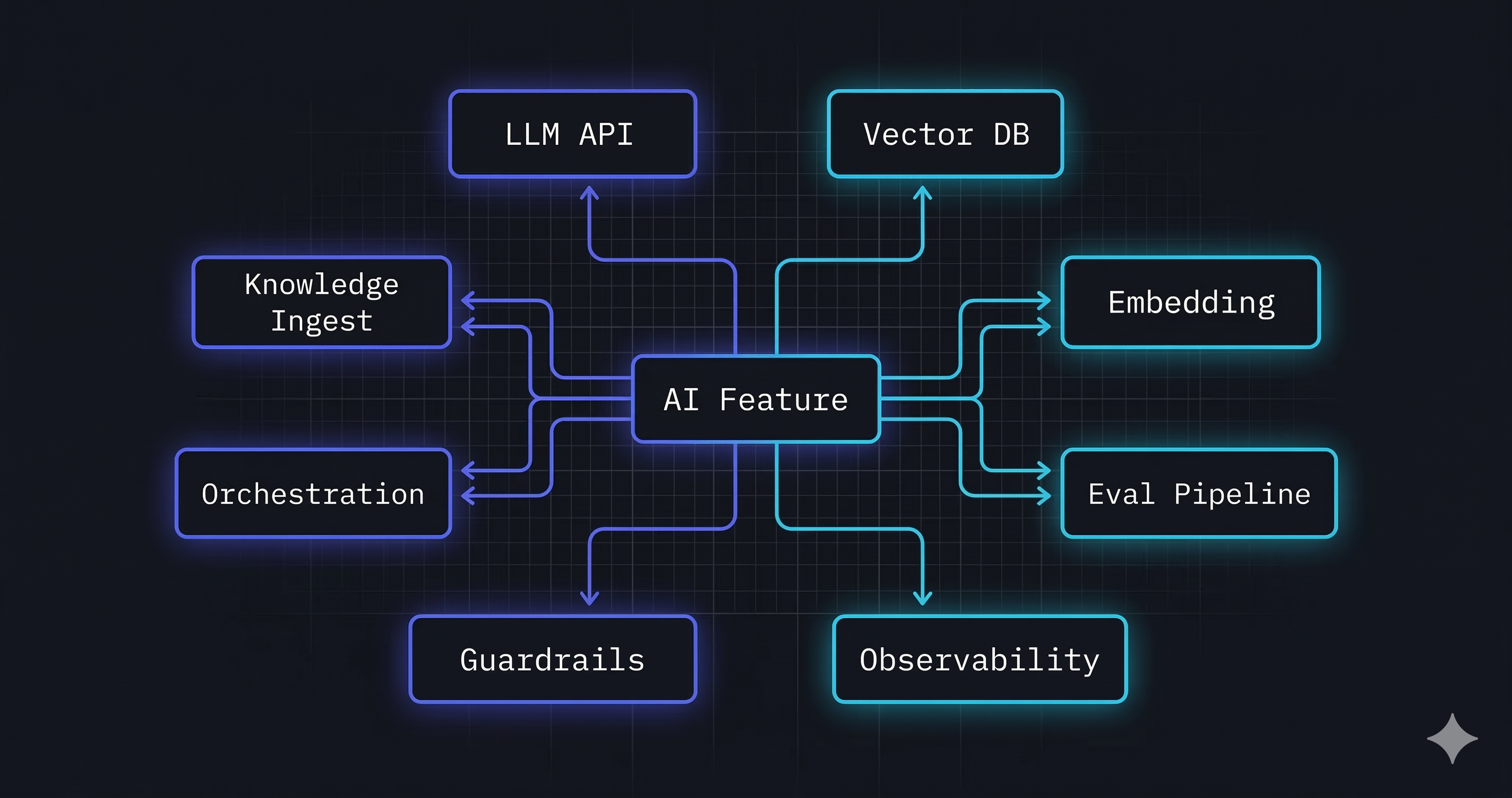

Once the design session starts, the component list grows fast:

- LLM provider API

- Embedding service

- Vector database

- Knowledge ingestion pipeline

- Prompt orchestration layer

- Guardrail service

- Model evaluation pipeline

- Model versioning system

- Observability stack

Each of those nine components brings its own integration surface, failure mode, and vendor contract. None of that was in the original requirement.

That chat widget now has the architectural footprint of a mid-sized data platform. And you committed to it before measuring a single complexity dimension.

It’s not an edge case. This pattern shows up in nearly every enterprise AI initiative.

Why AI Systems Multiply Complexity

Traditional enterprise applications have bounded complexity. A CRM integration adds one API, one data domain, one vendor. You can reason about it linearly.

AI systems don’t work that way. Each AI capability introduces a cluster of dependencies that cascade outward.

Model orchestration adds runtime state management that doesn’t exist in request/response systems. Prompts are not code. They behave differently across model versions, temperature settings, and context window constraints. Even 200k-token windows don’t eliminate the need to control what enters them. Orchestrating multiple models or agent steps multiplies this surface area.

RAG pipelines (Retrieval Augmented Generation) are deceptively complex. RAG sounds simple until you build it. Embedding models must match retrieval models, chunking strategies affect answer quality, retrieval latency compounds with LLM latency, and knowledge freshness requires continuous ingestion pipelines.

Vector storage introduces a new data persistence layer with its own indexing strategies, consistency models, and operational overhead. It doesn’t fit cleanly into existing data governance frameworks because it stores semantic meaning, not structured records.

Model governance is architecturally novel. You need to track which model version produced which output, reproduce results for audit purposes, and manage prompt templates as versioned artifacts. None of your existing deployment pipelines were designed for this.

Evaluation pipelines must run continuously, not just at release time. Model behavior drifts. An embedding model silently updated by your vendor changes vector representations, and retrieval quality degrades without any alerts. Without automated evaluation infrastructure, you won’t know until a customer complains.

Cost management is an architectural constraint, not a billing concern. LLM inference cost scales with token volume. A poorly designed prompt that retrieves too much context can increase per-request cost by 10×. This requires architectural controls at the orchestration layer.

Observability for AI systems is harder than for traditional APIs. Latency has three contributing layers (retrieval, embedding, generation), failure modes are probabilistic not deterministic, and quality metrics require human-in-the-loop or automated LLM-judge evaluation.

What you end up with is an architecture that looks simple from the outside but carries compounded integration load, fragmented data domains, and governance gaps that grow with every model update.

A Real Enterprise Example

Imagine a team building a Customer Support AI Assistant for an enterprise. The product requirement: deflect common support tickets by giving customers accurate answers from the company’s knowledge base.

The functional scope seems narrow. The architecture that emerges is not.

Architecture components required:

| Component | Role | Example tools |

|---|---|---|

| Frontend application | Conversational UI, session management | React, Next.js |

| API Gateway | Rate limiting, auth, routing, cost controls | Kong, AWS API Gateway |

| LLM Provider | Response generation | OpenAI, Anthropic, Google Gemini |

| Embedding Service | Converts queries and documents to vector representations | OpenAI Embeddings, Cohere Embed |

| Vector Database | Stores and retrieves semantically similar document chunks | Pinecone, Weaviate, pgvector |

| Knowledge Ingestion Pipeline | Ingests from CRM (e.g., Salesforce), chunks, embeds, and indexes knowledge base documents | LangChain, LlamaIndex |

| Model Evaluation Service | Automated quality scoring, regression detection | LangSmith, Ragas |

| Guardrail Service | Filters harmful inputs/outputs, enforces policy constraints | Guardrails AI, LlamaGuard |

| Observability Stack | Latency, cost, quality, and error monitoring | Datadog, LangSmith |

Nine components. On day one, each is managed by a different team, a different vendor, or a different operational runbook.

The problems that follow are architectural, not technical:

- The knowledge ingestion pipeline and the vector database have separate operational owners. When document freshness degrades, nobody owns the end-to-end SLA.

- The embedding model and the retrieval model are implicitly coupled. Swapping one vendor for cost reasons silently breaks the other without any compilation error or test failure.

- Guardrails and the LLM provider both process the full prompt. When a guardrail fires, debugging requires tracing across two vendor APIs, two logging systems, and two billing accounts.

- Cost attribution is impossible without architectural instrumentation at every layer.

The product spec doesn’t mention any of it. The architecture team finds out during the design session.

Measuring the Complexity

Architecture decisions made without measurement are decisions made by assumption.

For context: a traditional feature (3 components, 1 data store) might yield a Complexity Score of 6. An AI feature (10 components, 4 data stores) easily yields a Complexity Score of 20+. That gap is the architectural debt you are committing to before a line of code is written.

The Architecture Complexity Index, available in the Lab, is a tool designed to quantify this problem before it becomes irreversible.

It evaluates seven weighted complexity drivers that enterprise architects encounter across system designs:

| Dimension | Weight | What it captures |

|---|---|---|

| Integration load | 20% | External APIs, interfaces, and integration surface area |

| Service surface | 18% | Number of services and their operational footprint |

| Cloud entropy | 14% | Multi-cloud provider spread and coordination overhead |

| Deployment volatility | 16% | Release cadence and deployment coordination complexity |

| Vendor footprint | 16% | External vendor platforms and their governance burden |

| Data sprawl | 16% | Number of data domains and cross-domain coupling |

| Governance maturity | offset | Architecture governance that counteracts raw complexity |

The tool gives architects a number before they commit to a direction, so tradeoffs get evaluated with evidence rather than instinct.

If your AI architecture scores in the High or Critical range before you’ve written a line of code, you have an architectural problem to solve, not an implementation problem.

Running the Customer Support AI Assistant Through the Tool

The form below is pre-loaded with the Customer Support AI Assistant architecture described above. Adjust any input to see how architectural decisions change the score in real time.

What a score of 57 means in practice:

A score of 57 (High tier) means this architecture carries meaningful systemic risk before the first deployment. The 1.9× debt multiplier indicates that every hour of development effort will require approximately 1.9× the effort to maintain, extend, or refactor. Not because the team wrote bad code. The architectural configuration itself generates friction.

The top drivers (integration load, vendor footprint, and service surface) tell you exactly where the architectural investment should go: reduce vendor count where possible, consolidate integration surface, and establish a governance layer that spans all nine services before the system scales.

What changes the score:

- Raising governance maturity from 2 to 4 reduces the score by 12 points (from 57 to 45, moving from High to Moderate tier). It’s the highest-leverage change available, and the hardest to act on, because it requires org change, not a config tweak.

- Reducing from 2 cloud providers to 1 drops cloud entropy from 55 to 20, saving approximately 5 points.

- Consolidating the embedding service and vector database into a single managed RAG platform cuts both service count and vendor count simultaneously, saving approximately 4 points.

Three targeted changes, made before the first service is deployed, shift this architecture from High to Moderate, cutting technical debt exposure roughly in half. Adjust the values above to model your own scenario.

Lessons for Architects

Treat AI as a platform capability, not a feature.

Adding AI to an enterprise is not equivalent to adding a feature. It introduces a new technical platform that must be owned, governed, and evolved at the platform level. The nine-component architecture above doesn’t fit into a sprint. It requires a product team, an infrastructure owner, a data owner, and a governance framework that spans all of them. Scoping it as a feature is how organizations end up with nine orphaned services and no one accountable for the integration graph.

AI complexity hides in integration layers, not in the model.

The LLM itself is a black box API call. The complexity lives in everything around it: the pipelines that feed it, the systems that evaluate it, the governance structures that constrain it. Most architecture reviews focus on model selection. The integration graph is where the real risk accumulates.

Orchestration frameworks like LangChain, LlamaIndex, or platform-native agent services (Bedrock Agents, Azure AI Foundry) create implicit dependencies between tools, prompts, and model versions. When any one component changes, the orchestration behavior can change in unpredictable ways. It’s a category of architectural coupling that doesn’t map cleanly to service mesh patterns or API contract testing, and most teams don’t discover it until something breaks in production.

Data pipelines outlast every model and every vendor.

Models will change. Vendors will change. The knowledge data flowing through your ingestion pipeline, the evaluation datasets that define quality, and the conversation history that informs future improvements will outlast every other component in this stack. Design your data pipeline as a first-class architectural concern, not as a feature of the vector database.

Governance is the highest-leverage investment, and the most deferred.

The ACI tool’s scoring confirms this quantitatively: governance maturity has the largest single-factor impact on reducing complexity score. An architecture review board, documented ADRs (Architecture Decision Records), and clear ownership boundaries for AI components reduce complexity by counteracting the raw integration and service surface area numbers. Teams defer it because it feels like overhead. The complexity score shows exactly what that deferral costs.

The Architecture Complexity Index is a pre-implementation tool. Run it when you have a design on the whiteboard, not after you have nine services in production. The cost of refactoring a High-complexity AI architecture after deployment is measured in quarters and organizational reorgs, not bug tickets.

Try It With Your Architecture

The Architecture Complexity Index is available in the Lab as a free, browser-based tool. No account required.

Enter the dimensions of your current or proposed architecture. Review the score, the risk tier, the technical debt multiplier, and the driver breakdown. Use the output as a structured input to your architecture review process.

If the score comes back High or Critical, that’s useful information to have before you’ve made commitments that are difficult to reverse.

Architecture decisions compound. Running the numbers before the first sprint is cheaper than running them after the sixth.

Learn more about architecture decision making on the Architecture page, or read about the philosophy behind this site on the About page.